This winter has seen unprecedented cold and snow across Europe and the US. In particular, the UK experienced some of the coldest December temperatures on record, with snow and ice causing many airports to close.

Indeed, George Osborne, the UK's Chancellor of the Exchequer, attributed the country's declining economy in the last quarter of 2010 to this weather.

A perfectly sensible question to ask is whether this type of weather will become more likely under climate change. The trouble is that we do not know the answer with any great confidence.

The key point is that the cold weather was not associated with some 'global cooling' but with an anomalous circulation pattern that brought Arctic air to the UK and other parts of Europe.

This very same circulation pattern also brought warm temperatures to parts of Canada and south-east Europe. Global mean temperatures were barely affected.

Detailed, 100-year predictions not yet possible

Weather-forecast models, which only have to predict a few days ahead at a time, are able to represent this level of detail very well. Global climate models, however, such as those used in the fourth assessment report by the Intergovernmental Panel on Climate Change (IPCC) to predict weather systems 100 years or more ahead of time, do not work as well.

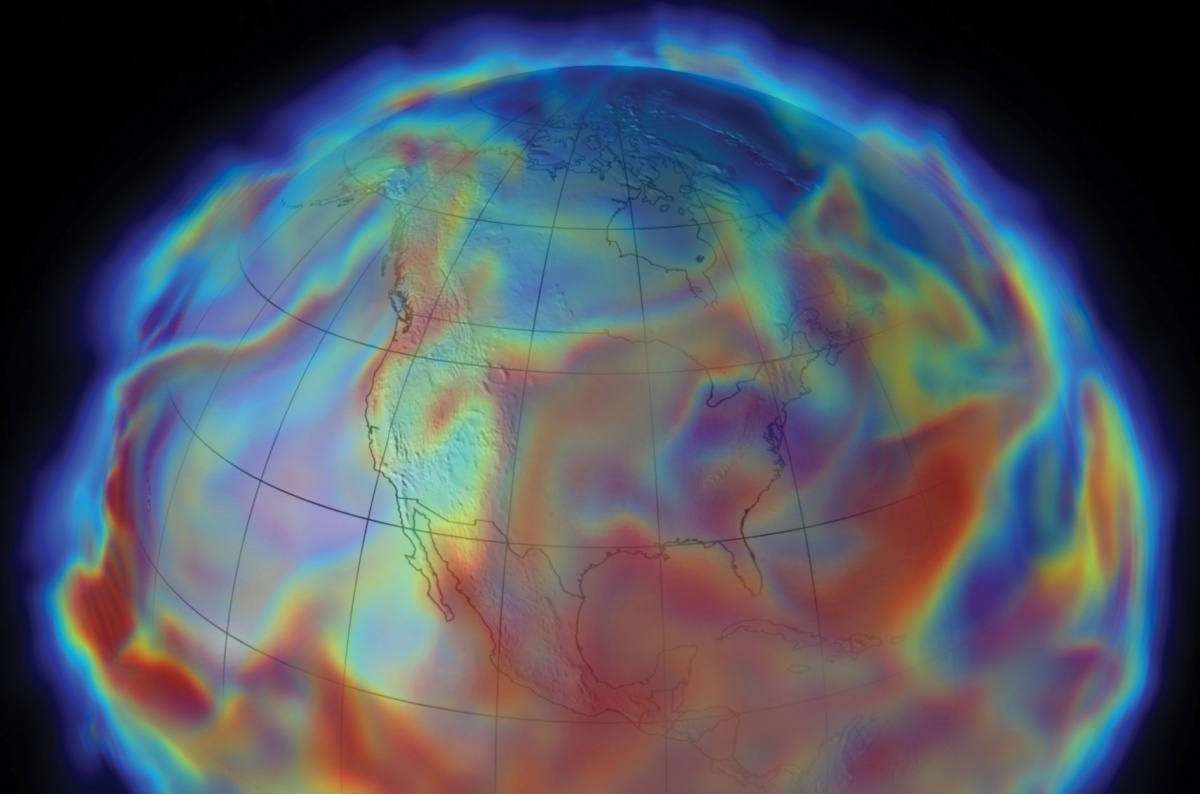

The problem is that simulating these weather patterns in comprehensive numerical models - also known as 'simulators' - requires a fine grid-point spacing of a few tens of kilometers or less. The IPCC models typically have a grid spacing of hundreds of kilometers and so such climate simulators cannot reliably assess whether this type of weather pattern - cold in Europe, warm elsewhere - will become more or less likely with increased atmospheric greenhouse gas concentrations.
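To give a rough sense of what this gap in resolution means, the sketch below estimates how many horizontal grid cells are needed to cover the globe at a given spacing. It is a back-of-the-envelope illustration only: the function name `horizontal_cells`, the example spacings of 200 km and 20 km, and the assumption of a uniform square grid on a spherical Earth are all illustrative choices, not a description of how any IPCC model is actually gridded.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def horizontal_cells(grid_spacing_km: float) -> float:
    """Approximate number of horizontal grid cells covering the globe.

    Assumes (for illustration) uniform square cells on a spherical Earth.
    """
    surface_area_km2 = 4.0 * math.pi * EARTH_RADIUS_KM ** 2
    return surface_area_km2 / grid_spacing_km ** 2


coarse = horizontal_cells(200.0)  # "hundreds of kilometers" (assumed example)
fine = horizontal_cells(20.0)     # "a few tens of kilometers" (assumed example)

print(f"200 km spacing: ~{coarse:,.0f} cells")
print(f"20 km spacing:  ~{fine:,.0f} cells")
print(f"ratio: {fine / coarse:.0f}x")
```

Under these assumptions, a tenfold reduction in spacing means roughly a hundredfold increase in horizontal cells, before any change in vertical levels or time step is taken into account.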

Uncertainties from lack of computing power

Unfortunately, this is but one example of the many uncertainties about regional climate change that are exacerbated by a lack of resolution in climate simulators. Even when considering increases in global mean temperatures, we cannot be sure whether climate change will be a catastrophe for humanity or something we can live with and adapt to.

This uncertainty arises not because we do not know the relevant physics of the problem, but rather because we do not have the computing power to solve the known partial-differential equations of climate science with sufficient accuracy.

Just how difficult the climate equations are to solve is exemplified by the fact that the existence of smooth solutions to some of them, in particular the Navier-Stokes equations (the mathematical description of the motion of fluid substances), is still unproven. Solving this 'existence' problem is one of the Clay Mathematics Institute's "Millennium Prize problems", right up there with the , as a key unsolved mathematical problem. Climate science is a tough problem: there is none tougher in computational science.

Increasing resolution of climate models is too expensive

Today, the computing needs of climate modellers are not being met. One reason is that increasing the resolution of models is computationally expensive: halving the grid spacing, say from 1 km to 500 meters, can increase computational costs by up to a factor of 16.
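One common way to arrive at a figure like 16 is sketched below. The article does not spell out the arithmetic, so the reasoning here is an assumption: halving the spacing in all three spatial dimensions multiplies the number of grid points by 2 x 2 x 2 = 8, and numerical stability typically requires the time step to be halved as well, doubling the number of steps. The function name `cost_factor` and its parameters are hypothetical.

```python
def cost_factor(refinement: float,
                spatial_dims: int = 3,
                timestep_scales: bool = True) -> float:
    """Rough multiplier on computational cost when the grid is refined.

    refinement      -- factor by which grid spacing shrinks (2.0 = halving)
    spatial_dims    -- number of spatial dimensions refined (assumed 3)
    timestep_scales -- whether the time step must shrink in proportion
                       (assumed True, as with a CFL-type stability limit)
    """
    factor = refinement ** spatial_dims
    if timestep_scales:
        factor *= refinement
    return factor


# Halving the spacing in all three dimensions plus the time step
print(cost_factor(2.0))                  # 16.0
# Refining only the two horizontal dimensions plus the time step
print(cost_factor(2.0, spatial_dims=2))  # 8.0
```

The "up to" in the text fits this picture: if only the horizontal spacing is refined, the same estimate gives a factor of 8 rather than 16.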

Moreover, national climate-prediction institutes, such as the Met Office in the UK, have many other demands on their computing resources. They need to develop numerical algorithms that can simulate not only fluid dynamics but also the relevant chemistry and biology of our planet's carbon cycle. Finally, they need not only to run the climate simulators centuries into the future, but also to perform 1000-year integrations over past climatic periods.

However, the UK high-performance computers used exclusively for meteorological and climate research barely make the list of the world's top 50 most powerful computers. The goal here is not a million-dollar maths prize, but rather confidence about the trillion-dollar-plus implications of climate change. A more accurate assessment of the real level of threat posed by climate change is crucial, not only to help break the current stalemate in mitigation talks, but also to invest wisely in new infrastructure to adapt to climate change.

We certainly need much more accurate simulators of climate than we currently have to tackle the issue of climate geo-engineering, which involves manipulating the Earth's climate to counteract the effects of global warming. We should be doing all that is humanly possible to ensure these goals are achievable.

Calling for collaboration

Climate change is a global problem that requires global solutions. Humanity has repeatedly shown that it is more than able to step up a gear in technical and scientific achievement when the will is there. For example, countries have come together to build technological marvels unachievable at national scales. In Europe, these include the ITER fusion facility, the many space missions built by the European Space Agency, and the Large Hadron Collider at CERN near Geneva. These facilities have come about because national budgets have been insufficient to tackle key problems in space physics, particle physics or fusion research.

It is time to start planning for a truly international climate-prediction facility, on a scale such as ITER or CERN. It would allow the sort of research experimentation that is currently impossible at existing research centers. Such a facility would allow the dedicated use of cutting-edge exascale (10^18 operations per second) technology for understanding and predicting climate, for the benefit of global society, as soon as this technology becomes available in a few years' time. Not a number 50 machine for a number 50 problem, but a number 1 machine for a number 1 problem. It is time to step up a gear if we really want to understand the nature of this climate threat.

This is an edited version of an article first published in the March 2011 issue of .